Accelerating Science at Scale

Jon Laurent, Siddharth Narayanan, Seenia Hong, Richard Magness

Date:

03.16.2026

Edison Scientific Partners with NVIDIA to Push the Frontier of AI for Science

Scientific research is facing an intellectual bottleneck. With more than 10 million research papers added every year and datasets growing exponentially, the frontier of science is becoming harder to push forward. This is the problem Edison Scientific, a spin-out of the non-profit FutureHouse, set out to solve: to accelerate discovery across fields by giving every researcher access to an AI scientist. Solving this at scale requires not just capable models and agents, but the infrastructure to train them and the benchmarks to measure them carefully. Our partnership with NVIDIA has been integral to both, enabling our teams to work closely together in adopting NVIDIA NeMo and Nemotron to develop solutions into our stack.

Kosmos: Our AI Scientist

Kosmos, our AI scientist, can complete the equivalent of 6 months of research in a single day with 80% reproducibility. It reads 1,500 papers, runs thousands of lines of analysis code, and maintains coherence across tens of millions of tokens — pushing the boundaries of modern agentic systems. Building an intelligent system that can reason, plan, and execute across complex scientific workflows requires more than a single model. For Kosmos, Edison leverages the NVIDIA NeMo and Nemotron technology stack. NeMo Gym provides scalable reinforcement learning training environments. NeMo RL enables advanced RL training pipelines, including group relative policy optimization (GRPO) and end-to-end FP8 RL training, for models with hundreds of billions of parameters, such as NVIDIA’s latest Nemotron 3 open models. Through Aviary, Edison’s open-source framework connected with NeMo Gym for scientific environments, we train agents across biology, chemistry, and related domains for tasks including literature research, bioinformatic data analysis, and multi-step scientific problem-solving.

A key component of Kosmos is the Literature Agent, which searches over 175 million scientific articles, patents, and clinical trials. Literature is powered by Nemotron Parse, a specialized vision-language model that transforms how we process scientific documents. Scientific papers are not clean text documents; they are dense, multimodal artifacts where the key finding is often hidden in a figure, not stated in the abstract. Rule-based PDF parsers fail in this environment: they truncate panels, lose spatial context, and ultimately contaminate context downstream in the retrieval pipeline. Nemotron Parse changes this, unlocking the multimodal pipeline for Edison. It processes documents page-by-page, producing classified bounding boxes and parsed text in meaningful structural categories such as figures, tables, formulas, and captions.

Compared to the best rule-based parsers, integrating Nemotron Parse into our Literature Agent improved figure understanding by 15%, text understanding by 3%, and table understanding by 7%. It also unlocked a new capability to have equations accurately converted to LaTeX, substantially improving formula understanding across our pipeline.

Learn more about how Edison Scientific leverages NVIDIA technology from NVIDIA’s case study and tech blog.

Measuring What Matters

To properly measure the progress of our AI scientist, we need robust benchmarks. Existing benchmarks are not up to the task. Most existing AI benchmarks measure knowledge regurgitation on clean, structured questions. Real scientific work is different. Evidence can be scattered across dozens of sources and is often ambiguous. A system that aces standardized tests can still fail when asked to synthesize a body of literature, let alone tackle end-to-end research tasks. Building Kosmos has made us rigorous about measurement — not just because we want to know if it works, but because the field needs better benchmarks to make progress.

Previously, we released benchmarks to measure this progress: LAB-Bench (and subsequently LABBench2) was the first agentic benchmark for research task capabilities, moving beyond pure knowledge to real-world work. BixBench was the first dedicated benchmark for measuring the bioinformatics capabilities of AI systems, and has since become the de facto standard in the field, cited by multiple teams developing agents for bioinformatics.

Introducing BixBench-Hypothesis

BixBench was effective at measuring an agent's ability to carry out correct analysis given explicit instructions. What it did not assess was analytical judgment under ambiguity or the ability to pursue a hypothesis with an open-ended goal, which are closer to how scientists actually work.

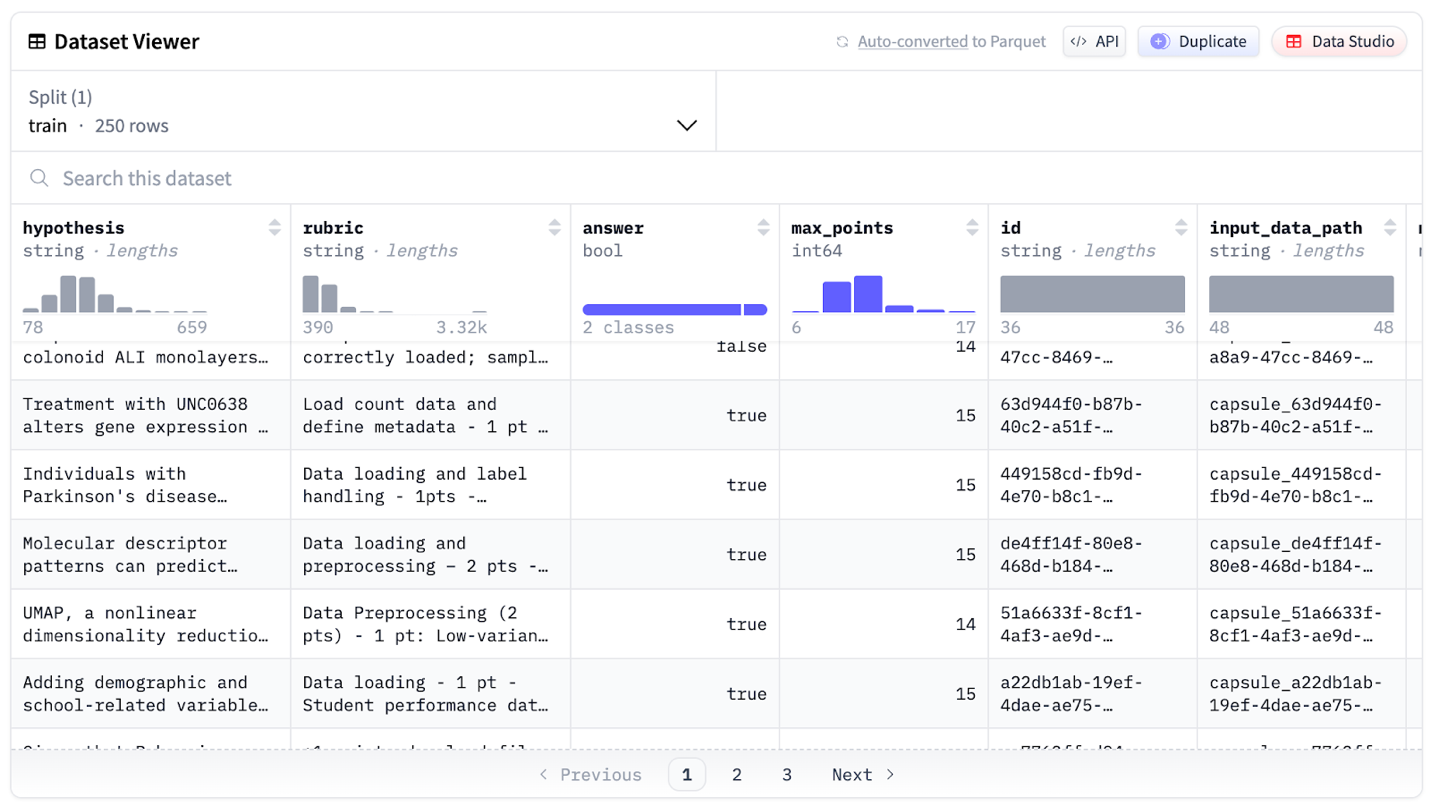

BixBench-Hypothesis (BBH) addresses this. Derived from the original BixBench capsule framework, BBH reconfigures each task as a (data, hypothesis, protocol) tuple paired with an evaluation rubric. The protocol is a step-by-step guide for carrying out an analysis on the data to address the hypothesis. The rubric is a structured set of expected outputs used to score an agent's analysis, consisting of 1- or 2-point subtasks plus a 5-point final objective tied to correctly supporting or rejecting the hypothesis. There are 51 hypothesis-driven tasks comprising BixBench-Hypothesis (split between Python and R).

BBH-Train: An Open Training Dataset, Built with NVIDIA

Frontier models currently struggle on BBH, demonstrating the need for continued improvement in analytical judgment and execution. To accelerate progress in this area, we collaborated with NVIDIA to produce and release BBH-Train — an open-source collection of 250 hypothesis capsules similar in scope to BBH, designed for RL-based training.

We built BBH-Train using a combination of de novo domain expert assembly and AI-assisted construction. For expert assembly, we engaged a panel of PhD-level bioinformaticians to create capsules from scratch, each contributing an expert-written analytical trajectory that was graded against a rubric through an iterative cycle of review and refinement. For AI-assisted assembly, we built a pipeline that, given a published study, identified 2–3 sub-hypotheses relying on publicly available data and derived a protocol and rubric for each. These auto-generated capsules were reviewed and revised by the same expert panel and verified as solvable by a purpose-built agent pipeline before inclusion. Pilot experiments have shown that this new dataset is effective at improving the model performance on the BBH benchmark.

BBH-Train is available now on HuggingFace. To train agents on BBH-Train, we also released hypotest, a REPL-like environment that integrates with NeMo Gym and NeMo RL.

This work — from the NVIDIA NeMo and Nemotron powered training infrastructure behind Kosmos to the benchmarks we use to measure it — reflects what we believe is necessary to build AI that genuinely accelerates science. We are excited to keep building and scaling Kosmos with NVIDIA technologies across many layers of this stack.

For more on Edison Scientific's featured work at NVIDIA's GTC 2026, hear our co-founder Andrew elaborates more on AI Scientists, and sessions on open models by NVIDIA's Healthcare and Life Sciences VP Kimberly, and AI Software Product Management VP Kari.